Why Most Data Modernization Programs Miss Their Deadlines

Key takeaways

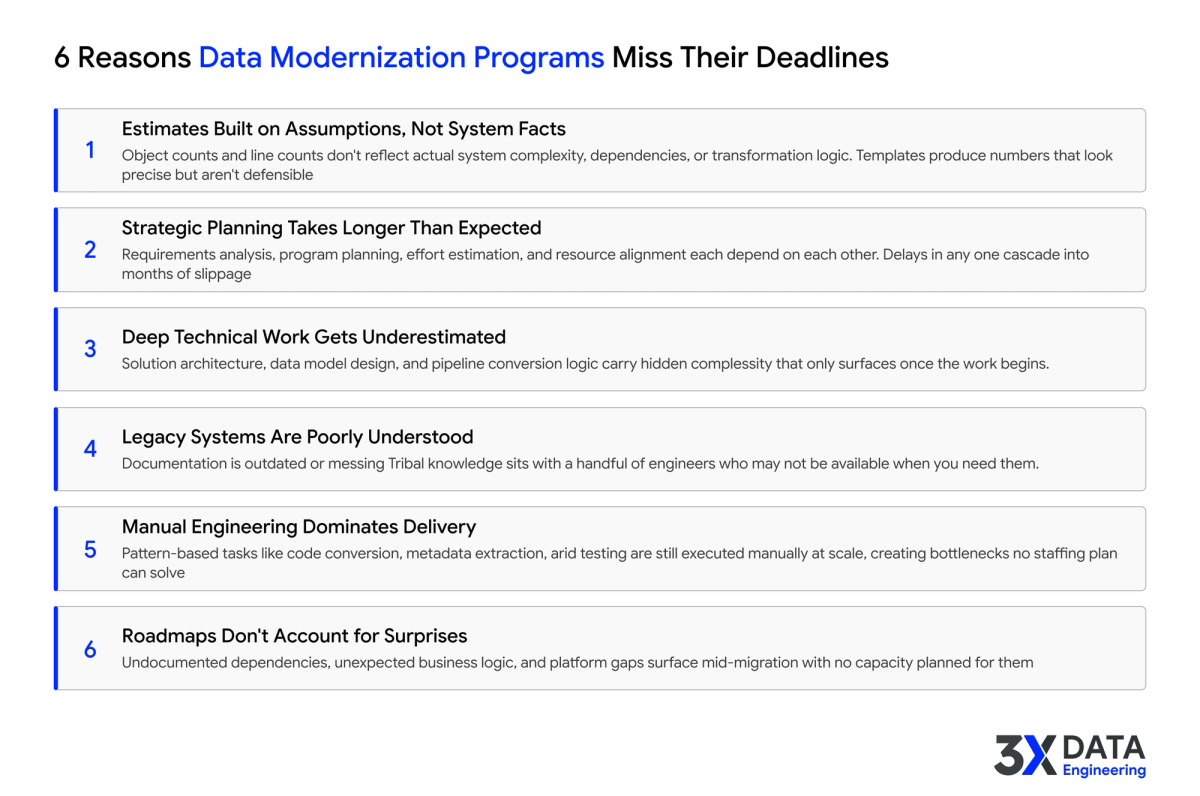

- Programs miss deadlines for structural reasons, not effort reasons. Adding people does not fix structural problems.

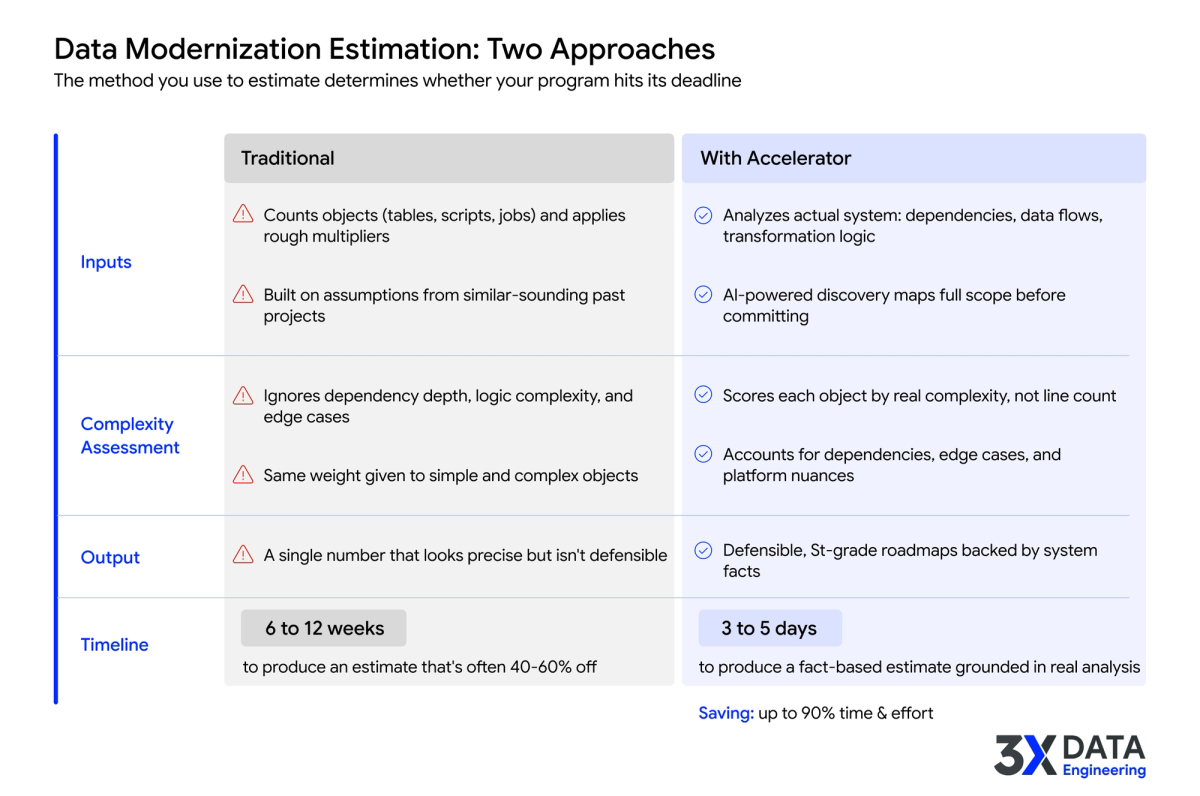

- Estimation based on average-time-per-object carries a 40 to 60 percent error margin from day one.

- Discovery and assessment take longer than planned in more than 80 percent of programs that miss deadlines.

- Six specific structural fixes address most of the common failure modes.

Reason 1: Estimation based on object counts, not complexity

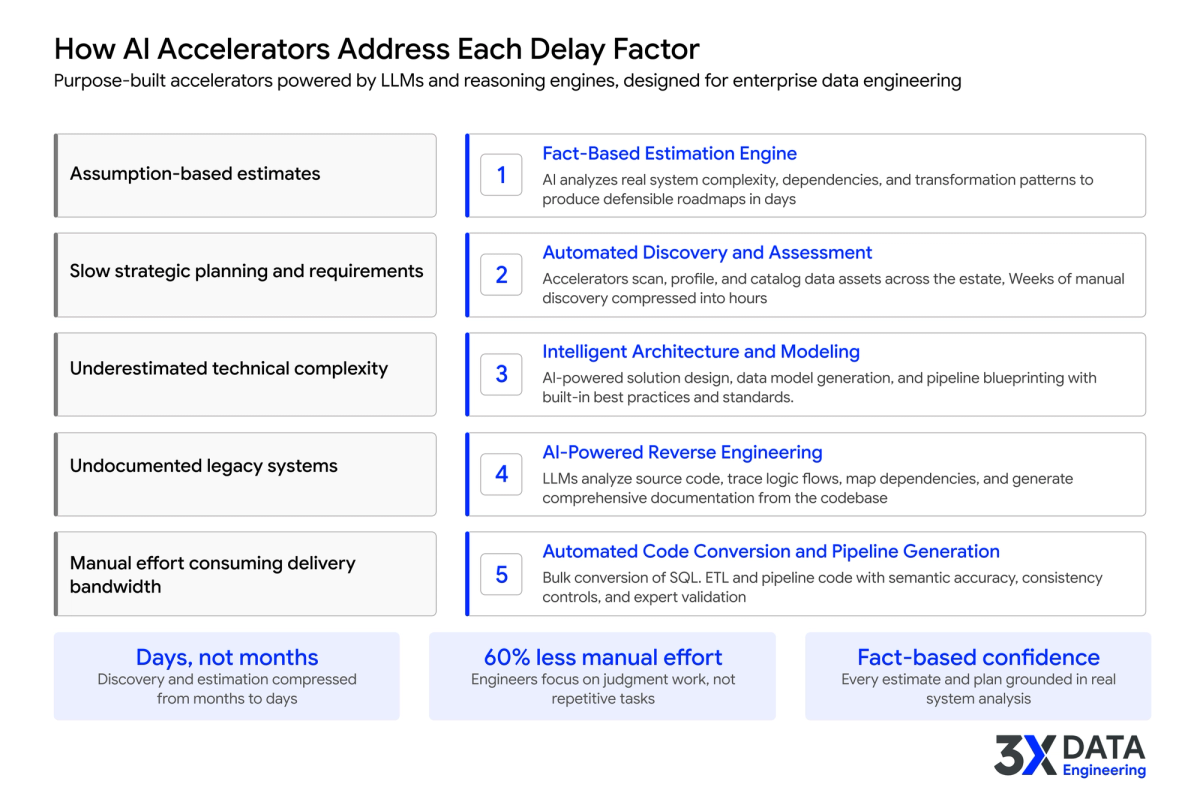

This is the single most common cause of timeline overruns. A 50-line standard stored procedure and a 500-line procedure with nested cursors are not the same conversion effort. Estimation that treats them as roughly equivalent bakes a 40 to 60 percent error into the plan.

Fix. Object-level complexity scoring before commitment. A timeline committed before complexity scoring is complete carries a 40 to 60 percent error margin.
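To make object-level scoring concrete, here is a minimal sketch in Python. The weights, patterns, and tier thresholds are illustrative assumptions, not calibrated values; a real program would tune them against its own conversion history and would likely parse the SQL rather than pattern-match it.

```python
import re

# Assumed weights for illustration only; calibrate against real conversion data.
LINE_WEIGHT = 0.02  # points per non-blank line
PATTERN_WEIGHTS = {
    "cursor": (re.compile(r"\bCURSOR\s+FOR\b", re.IGNORECASE), 8.0),
    "dynamic_sql": (re.compile(r"\bsp_executesql\b|\bEXEC\s*\(", re.IGNORECASE), 6.0),
}
# Hypothetical tier boundaries (upper bounds); anything above is "complex".
TIERS = [(5.0, "simple"), (15.0, "medium")]


def complexity_score(sql: str) -> float:
    """Score one object from its size plus the constructs that slow conversion."""
    non_blank = sum(1 for line in sql.splitlines() if line.strip())
    score = LINE_WEIGHT * non_blank
    for pattern, weight in PATTERN_WEIGHTS.values():
        score += weight * len(pattern.findall(sql))
    return score


def tier(score: float) -> str:
    """Map a score to a tier so each tier can carry its own effort estimate."""
    for upper_bound, name in TIERS:
        if score < upper_bound:
            return name
    return "complex"


# A 50-line procedure with no cursors versus a 500-line procedure with two.
simple_proc = "\n".join(["SELECT 1;"] * 50)
complex_proc = "\n".join(
    [
        "DECLARE c1 CURSOR FOR SELECT id FROM t;",
        "DECLARE c2 CURSOR FOR SELECT id FROM u;",
    ]
    + ["UPDATE t SET x = 1;"] * 498
)

for label, sql in [("simple_proc", simple_proc), ("complex_proc", complex_proc)]:
    print(label, tier(complexity_score(sql)))
```

Counting both procedures as "one object" hides the difference; scoring them lands them in different tiers, which is the point of estimating by tier rather than by average.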

Reason 2: Discovery treated as a sequential precondition

Traditional discovery is interview-based, document-based, and sequential. Each week of discovery delays the start of execution. Programs that stack four to twelve weeks of discovery in front of execution lose those weeks before they ever start moving.

Fix. Source-connected discovery. Read-only access to live systems. Discovery in days, not weeks. Execution starts in the second or third week, not the third month.
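As a rough illustration of what source-connected discovery means in practice, the sketch below inventories a database by reading its system catalog over a read-only connection instead of interviewing its owners. SQLite stands in for the source system here purely so the example is self-contained; the `inventory` helper and the sample schema are hypothetical, and a real source platform would expose its own catalog views.

```python
import sqlite3
from collections import Counter


def inventory(conn: sqlite3.Connection) -> Counter:
    """Count objects by type from the catalog without modifying the source."""
    # SQLite-specific guard: reject any write attempted on this connection.
    conn.execute("PRAGMA query_only = ON")
    rows = conn.execute(
        "SELECT type, name FROM sqlite_master WHERE name NOT LIKE 'sqlite_%'"
    ).fetchall()
    return Counter(obj_type for obj_type, _name in rows)


# Stand-in "live system" built in memory for the demonstration.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER, name TEXT);
    CREATE TABLE orders (id INTEGER, customer_id INTEGER);
    CREATE VIEW order_counts AS
        SELECT customer_id, COUNT(*) AS n FROM orders GROUP BY customer_id;
    CREATE INDEX idx_orders_customer ON orders (customer_id);
    """
)

counts = inventory(conn)
for obj_type in sorted(counts):
    print(obj_type, counts[obj_type])
```

The same catalog query can also pull object definitions, which is what feeds complexity scoring without a separate documentation pass.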

Reason 3: Architecture decisions stalled in committee

Major architecture decisions (Warehouse versus Lakehouse, workspace boundaries, governance model) often stall in cross-functional review. Each stalled review cycle costs about a week, and a handful of them costs a month.

Fix. Named architecture owners with explicit decision authority. Architecture decisions get reviewed by a senior architect and signed off in days, not weeks. Committee review for major decisions only, not for every workstream choice.

Reason 4: Testing left to the end

Programs that defer testing until conversion completes discover broken logic in the final third of the program, when fixing it triggers downstream rework. Each rework cycle costs weeks.

Fix. Automated reconciliation per migration wave. Source and target are compared on each wave. Discrepancies surface immediately, not three months later.
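A per-wave reconciliation check can be sketched as follows. This is a minimal example under stated assumptions: SQLite in-memory databases stand in for source and target, the `table_fingerprint` and `reconcile_wave` helpers are hypothetical names, and whole tables are read into memory, which would not scale to production volumes without partitioned or database-side checksums.

```python
import hashlib
import sqlite3


def table_fingerprint(conn: sqlite3.Connection, table: str) -> tuple:
    """Return (row count, order-independent content hash) for one table."""
    # The table name is interpolated, so pass only names from a trusted wave manifest.
    rows = conn.execute(f'SELECT * FROM "{table}"').fetchall()
    digest = hashlib.sha256()
    for row in sorted(rows, key=repr):  # sort so physical row order does not matter
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def reconcile_wave(source, target, tables) -> dict:
    """Compare every table in a wave; return only the tables that differ."""
    mismatches = {}
    for table in tables:
        src = table_fingerprint(source, table)
        tgt = table_fingerprint(target, table)
        if src != tgt:
            mismatches[table] = {"source": src, "target": tgt}
    return mismatches


def make_db(rows):
    """Build a throwaway database for the demonstration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


source = make_db([(1, 10.0), (2, 25.5)])
good_target = make_db([(2, 25.5), (1, 10.0)])  # same rows, different order
bad_target = make_db([(1, 10.0), (2, 25.0)])   # one amount drifted in conversion

print(reconcile_wave(source, good_target, ["orders"]))        # no mismatches
print(list(reconcile_wave(source, bad_target, ["orders"])))   # lists "orders"
```

Running a check like this at the end of each wave is what turns a discrepancy into a same-week fix instead of late-program rework.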

Reason 5: Senior engineers spending time on volume work

Senior engineers in most programs spend 60 to 80 percent of their time on tasks that do not require their judgment: bulk code conversion, documentation, source profiling, and technical specifications. The senior engineering hour is the most constrained resource on the program; spending it on volume work is the single largest source of delay.

Fix. AI acceleration on volume tasks. Senior engineers review and approve, but do not author. The split is 60 to 75 percent AI volume work, 25 to 40 percent engineer judgment work.

Reason 6: Scope creep through legacy report inheritance

Programs often inherit every legacy report and dashboard, then build their target platform around them. Many of those reports are not used. Building the target around dead reports adds scope without adding value.

Fix. Usage analysis before scope freeze. Reports without usage in the past 90 days are candidates for retirement. The retirement decision belongs to the business owner, not the data team.
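The 90-day rule is simple to express in code. The sketch below assumes a usage log has already been reduced to a last-viewed date per report; the `retirement_candidates` function, the report names, and the dates are all hypothetical. It only produces a candidate list for the business owner to review and does not retire anything itself.

```python
from datetime import date, timedelta
from typing import Optional


def retirement_candidates(
    last_viewed: dict[str, Optional[date]],
    as_of: date,
    window_days: int = 90,
) -> list[str]:
    """Return reports with no recorded usage inside the window, sorted by name."""
    cutoff = as_of - timedelta(days=window_days)
    return sorted(
        name
        for name, seen in last_viewed.items()
        if seen is None or seen < cutoff  # never viewed, or last viewed before the cutoff
    )


# Illustrative data: None means the report has no usage record at all.
usage = {
    "daily_sales": date(2024, 6, 28),
    "regional_pipeline": date(2024, 5, 15),
    "legacy_margin": date(2023, 11, 2),
    "never_opened": None,
}

candidates = retirement_candidates(usage, as_of=date(2024, 6, 30))
print(candidates)  # ['legacy_margin', 'never_opened']
```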

Plan your modernization with a fact-based blueprint

If you are working on a data modernization program at risk of slipping, the next practical step is a fixed-price Modernization Assessment. Source-connected discovery, complexity scoring, target architecture, effort estimation, and bulk-converted sample code, delivered as a Modernization Canvas in 8 business days. No long discovery, no procurement cycle, Director-level signing authority.